Liquibot - Autonomous Bartending Robot

Project Aim

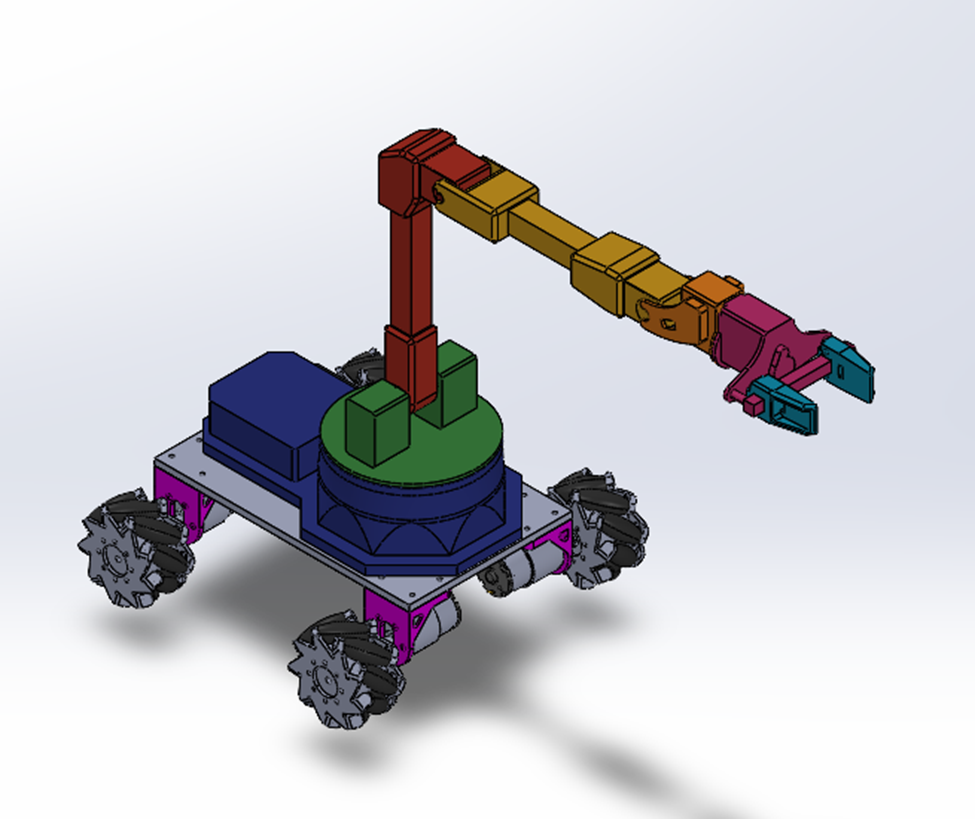

To design and develop an autonomous mobile platform that integrates wheeled locomotion, robotic arm manipulation, and vision-based localization for automated bartending operations, including container grasping, transport, and shaking motions.

Description

Liquibot is an integrated approach to autonomous mobile manipulation, aimed at bartending tasks that require coordinated locomotion and precise object handling. The platform combines custom mechanical design, multi-modal sensing, and dedicated control architectures to perform pick-and-place operations in structured environments.

The mechanical foundation is a scratch-built chassis that houses the structural components, motors, and electronics in a compact form factor. The wheeled base is currently driven by remote control: a smartphone running Dabble's gamepad interface connects over Bluetooth, and user inputs are translated into PWM signals that set the speed and direction of each wheel. Velocity control is limited because the wheels have no encoders, but the system still navigates successfully to predetermined locations for manipulation tasks.
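The mapping from gamepad input to per-wheel PWM can be sketched as follows. This is a minimal illustration, not the project's firmware: it assumes a differential (left/right) drive layout, stick axes normalized to [-1, 1], and an 8-bit PWM range. The function name `mix_to_wheel_pwm` and its parameters are hypothetical.

```python
def mix_to_wheel_pwm(throttle, turn, max_duty=255, deadzone=0.05):
    """Map two gamepad axes in [-1, 1] to ((duty, direction), (duty, direction)).

    Assumptions (not from the original project): differential drive,
    8-bit PWM duty, direction encoded as +1 (forward) or -1 (reverse).
    """
    # Ignore small stick noise around the center position.
    if abs(throttle) < deadzone:
        throttle = 0.0
    if abs(turn) < deadzone:
        turn = 0.0

    # Arcade-style mixing: turning speeds up one side and slows the other.
    left = throttle + turn
    right = throttle - turn

    # Scale both sides together so neither command exceeds full speed.
    scale = max(1.0, abs(left), abs(right))
    left /= scale
    right /= scale

    def to_pwm(command):
        direction = 1 if command >= 0 else -1
        return round(abs(command) * max_duty), direction

    return to_pwm(left), to_pwm(right)


if __name__ == "__main__":
    print(mix_to_wheel_pwm(1.0, 0.0))   # full forward
    print(mix_to_wheel_pwm(0.0, 1.0))   # spin in place
```

Without encoders, these duty cycles are only commands: the actual wheel speed depends on load and battery voltage, which is the limitation noted above.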

The custom-designed gripper accommodates cylindrical containers and glasses, providing reliable grasping across varied object geometries despite the system's 9 lb operational weight. The robotic arm is controlled through ROS via a Python API, which generates position-specific trajectories for complete pick-and-place cycles. Predefined trajectories move the arm from its home position to the container pickup, transport the container to a designated drop-off location, and return the arm to home, demonstrating the coordinated manipulation that bartending requires.

Perception and localization combine ORB-SLAM3 with AprilTag detection for precise spatial referencing. A webcam is interfaced through a ROS 2 camera driver node that publishes raw image frames; an AprilTag detector processes these frames and estimates each tag's pose relative to the camera frame. The AprilTags act as spatial markers that identify placement locations, ensuring accurate positioning during manipulation tasks.
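To use a tag pose reported in the camera frame as a placement target, it has to be expressed in a frame the arm understands. The sketch below shows that step as a composition of homogeneous transforms in plain Python. The camera mounting offset, the frame names, and the restriction to yaw-only rotations are all simplifying assumptions made for illustration; a real setup would take the extrinsics from calibration and the tag pose from the detector's output.

```python
import math


def make_transform(yaw_rad, translation):
    """Build a 4x4 homogeneous transform: rotation about z, then translation."""
    c, s = math.cos(yaw_rad), math.sin(yaw_rad)
    x, y, z = translation
    return [
        [c, -s, 0.0, x],
        [s, c, 0.0, y],
        [0.0, 0.0, 1.0, z],
        [0.0, 0.0, 0.0, 1.0],
    ]


def compose(a, b):
    """Matrix product a @ b for 4x4 nested lists."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
        for i in range(4)
    ]


def position_of(transform):
    """Extract the translation part (x, y, z) of a 4x4 transform."""
    return (transform[0][3], transform[1][3], transform[2][3])


# Hypothetical extrinsics: camera 0.10 m ahead of and 0.30 m above the base.
T_BASE_CAMERA = make_transform(0.0, (0.10, 0.0, 0.30))


def tag_in_base(t_camera_tag, t_base_camera=T_BASE_CAMERA):
    """Express a tag pose given in the camera frame in the robot base frame."""
    return compose(t_base_camera, t_camera_tag)


if __name__ == "__main__":
    # Example tag pose in the camera frame (made-up numbers).
    t_camera_tag = make_transform(math.pi / 2, (0.5, 0.2, -0.1))
    print(position_of(tag_in_base(t_camera_tag)))
```

The same composition extends to further frames, for example from the base frame to a map frame if one is available.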

The communication architecture uses micro-ROS with an ESP32 configured as the client, while a Raspberry Pi 4 publishes the webcam feed over WiFi for remote monitoring and control.

Future development focuses on four areas: operation in pre-mapped environments with AprilTag-guided trajectory generation, shaking motion profiles that mimic a bartender's actions, slosh control techniques that prevent liquid spillage, and a custom lightweight shaker designed within the robotic arm's payload constraints. These enhancements are intended to take Liquibot from a demonstration platform to a practical autonomous bartending system capable of complex drink preparation sequences.
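As an illustration of what a shaking motion profile might look like, the sketch below generates a sinusoidal end-effector offset with a smooth ramp at the start and end. It is not an implemented part of Liquibot: the amplitude, frequency, duration, and ramp values are placeholders, and the function names are hypothetical. The ramp only avoids an abrupt start and stop; it is not a slosh control technique.

```python
import math


def shake_offset(t, amplitude=0.05, frequency_hz=3.0, duration=2.0, ramp=0.5):
    """Offset in meters along the shake axis at time t (seconds).

    All default values are illustrative placeholders, not measured settings.
    Returns 0.0 outside the interval (0, duration).
    """
    if t <= 0.0 or t >= duration:
        return 0.0

    # Raised-cosine envelope: ease in over `ramp` seconds, ease out likewise.
    if t < ramp:
        envelope = 0.5 * (1.0 - math.cos(math.pi * t / ramp))
    elif t > duration - ramp:
        envelope = 0.5 * (1.0 - math.cos(math.pi * (duration - t) / ramp))
    else:
        envelope = 1.0

    return amplitude * envelope * math.sin(2.0 * math.pi * frequency_hz * t)


def sample_profile(rate_hz=50, duration=2.0, **kwargs):
    """Sample the profile at a fixed rate, including both endpoints."""
    n = int(duration * rate_hz)
    return [shake_offset(i / rate_hz, duration=duration, **kwargs) for i in range(n + 1)]


if __name__ == "__main__":
    profile = sample_profile()
    print(len(profile), max(abs(v) for v in profile))
```

Sampled offsets like these could be added to a fixed arm pose to form waypoints, subject to the arm's speed and payload limits.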